OK, I found my way back home after a fantastic week in Las Vegas at the annual HPE Discover event…

Since I was asked to teach the Master ASE Storage class twice to get 50+ people certified at the highest level of storage certification (and most actually did pass the exam, congratulations!), I did not really have a lot of time during the event to blog about all the news. That’s why I am only writing this article now…

There are always the typical bigger/better/more announcements:

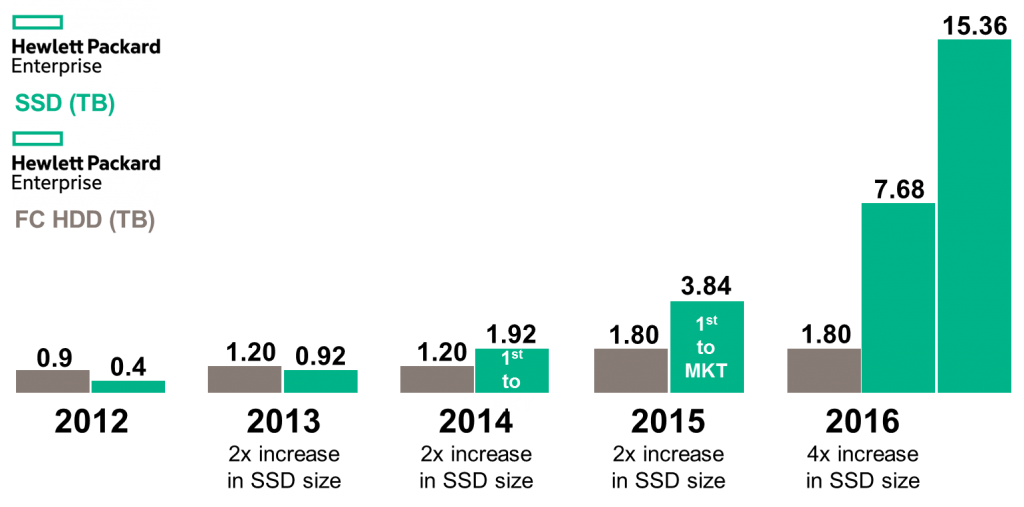

Bigger SSDs for 3PAR

Until now the largest drive available in a 3PAR system was 3.84TB. At Discover not one but two new drives were announced: a 7.68TB SSD and a huge 15.36TB SSD. This pushes the cost per GB below that of a 10K SAS drive: somewhere below $1.2/GB usable, compared to $1.5/GB for a SAS drive.

However, some side notes on this.

In the past 5 years the density of performance HDDs doubled. For SSDs it grew 38 times. Insane… How long will the SAS drive stay around? I won’t be impressed by the next 2.4TB SAS drive someday… 😉

On top of that, with features like deduplication you can store even more than 15.36TB on that drive… That is not an option for SAS drives, it is an SSD-only feature…
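To put those two numbers side by side, here is a quick back-of-the-envelope sketch that converts each 5-year factor into a growth factor per year. It assumes steady compound growth over the whole period, which is my simplification and not something announced at the event; the function name is just an illustration.

```python
def annual_growth_factor(total_factor, years):
    """Yearly multiplier that compounds to total_factor after the given number of years.

    Assumes the same growth rate every year (a simplification).
    """
    return total_factor ** (1 / years)


# Figures from the article: HDD density x2, SSD density x38, both over 5 years.
hdd_per_year = annual_growth_factor(2, 5)
ssd_per_year = annual_growth_factor(38, 5)

print(f"HDD density: x{hdd_per_year:.2f} per year")
print(f"SSD density: x{ssd_per_year:.2f} per year")
```

Under that assumption, SSD density roughly doubled every single year, while performance HDDs needed the full 5 years to double once.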

We should not forget that these sizes are good for customers who require capacity. If you do the math, a capacity that used to need 64 x 1.92TB drives or 32 x 3.84TB drives can now be covered with 16 x 7.68TB or even 8 x 15.36TB drives. Since a lot of the licensing in a 3PAR system is based on drive count, the larger capacity drives will be popular.

However! On the performance side I still prefer the 64 SSDs, since 64 SSDs will give you more performance than only 8 SSDs. So it will be up to you to find the right spot between capacity and performance. Luckily HPE has the right tools for this (Ninjastars).
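The math above can be sketched in a few lines. This only looks at raw capacity: RAID overhead, sparing and deduplication are deliberately ignored, and the helper name `drives_needed` is my own, not part of any HPE tool.

```python
import math

# Drive sizes in TB, as mentioned in the article.
DRIVE_SIZES_TB = [1.92, 3.84, 7.68, 15.36]


def drives_needed(target_raw_tb, drive_size_tb):
    """Smallest number of drives whose combined raw capacity reaches the target."""
    # Round the ratio first so floating-point noise does not add an extra drive.
    return math.ceil(round(target_raw_tb / drive_size_tb, 9))


# Raw capacity of the 64 x 1.92TB configuration used as the reference.
target_tb = 64 * 1.92

counts = {size: drives_needed(target_tb, size) for size in DRIVE_SIZES_TB}
for size, count in counts.items():
    print(f"{count:>2} x {size}TB")
```

Each step up in drive size halves the drive count for the same raw capacity, which is exactly why drive-count-based licensing gets cheaper with the bigger SSDs.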

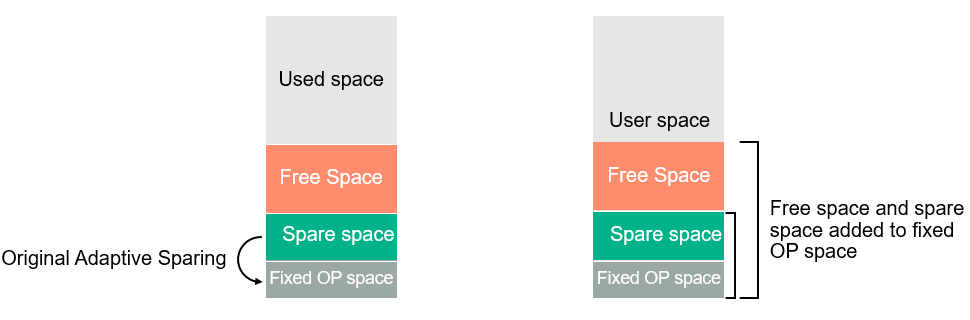

Adaptive Sparing 2.0

The next version of Adaptive Sparing was announced as well. It increases the effective overprovisioning space of every SSD inside a 3PAR system even further. This is achieved by extending overprovisioning into user space that’s not currently being used by the array. If space is required for volumes, it is instantly made available to the system without performance impact. I will write an article soon with more in-depth technical information on this.

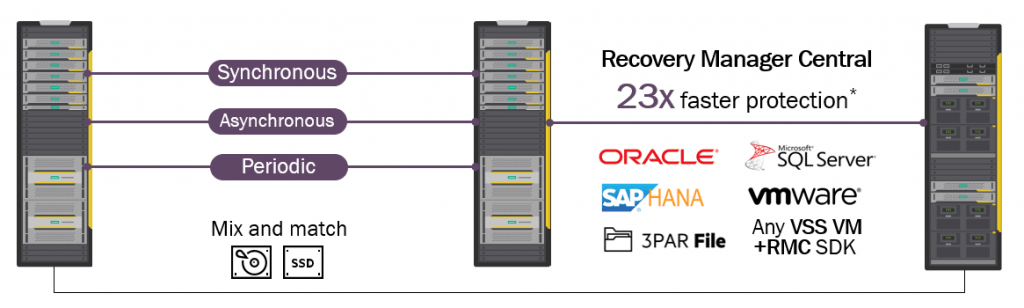

RMC 3.0

A new version of Recovery Manager Central was announced as well. Version 3.0 now enables flexible replication to StoreOnce for Oracle and SAP/HANA. Previous versions already supported VMware and SQL Server. For RMC too, a more in-depth article is on its way…

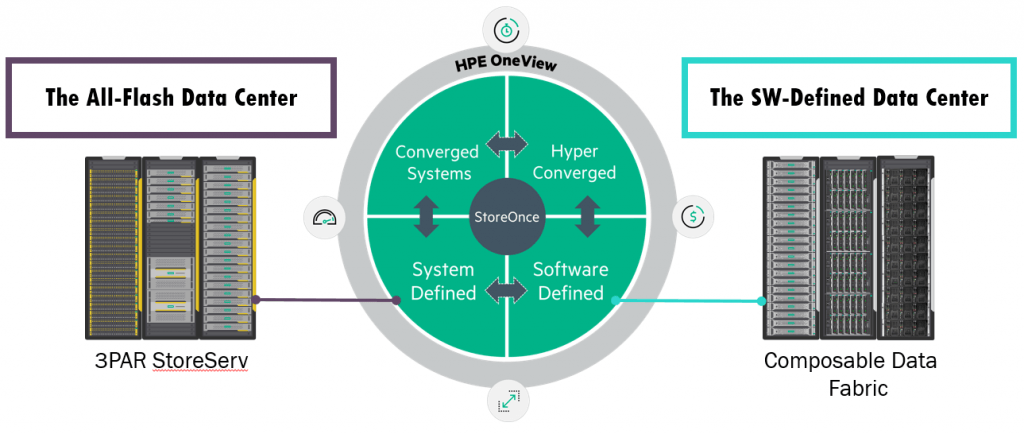

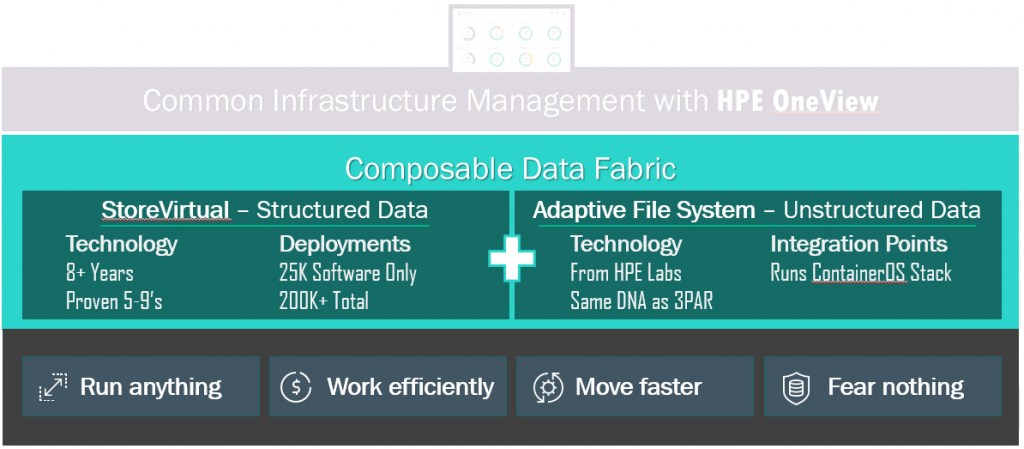

Composable Data Fabric

However, for me the biggest ‘announcement without products yet’ was the Composable Data Fabric.

Today this is more a vision than a real product launch, but I really like the idea of one Data Fabric, with 3PAR for the All-Flash Data Center and the Composable Data Fabric for the SW-Defined Data Center, all managed by one tool: HPE OneView.

One interface, one feature set, any workload in a federated setup. All you need in one storage platform.

What I like the most is that HPE (finally) puts its VSA in the picture, where it should have been for years already. I am quite a fan of this product, as you can tell from the various articles I have already published about it and from all the customers here in Belgium using VSA technology…

So there are no actual product launches yet, but what I notice is that the StoreVirtual platform is even more in the picture, for structured but also unstructured and file data… This would mean file and object storage would come alongside the block storage that is already there.

2016 will be a fun (storage) year, believe me!
